Pivot selection is an important part of quick sort, and there are many techniques, all with pros and cons. On average quick sort runs in O(n log n), but if it consistently chooses bad pivots its performance degrades to O(n^2). This happens if the pivot is consistently chosen so that all (or too many) of the elements in the array fall on the same side of it, that is, they are all smaller or all larger than the pivot. A classic case is when the first or last element is chosen as the pivot and the data is already sorted or nearly sorted.

What are some techniques to choose a pivot?

Choose the leftmost or rightmost element.
Pros: Simple to code, fast to calculate.
Cons: If the data is sorted or nearly sorted, quick sort will degrade to O(n^2).

Choose the middle element.
Pros: Simple to code, fast to calculate, but slightly slower than the above method.
Cons: Still can degrade to O(n^2). Easy for someone to construct an array that will cause it to degrade to O(n^2).

Choose the median of three.
Pros: Fairly simple to code, reasonably fast to calculate, but slightly slower than the above methods.
Cons: Still can degrade to O(n^2). Fairly easy for someone to construct an array that will cause it to degrade to O(n^2).

Choose the pivot randomly, using either the built-in random function or a custom-built one.
Pros: Harder for someone to construct an array that will cause it to degrade to O(n^2).
Cons: Selecting a random pivot is fairly slow. Still can degrade to O(n^2).
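To make the trade-offs concrete, here is a minimal Python sketch of quick sort with a pluggable pivot chooser. The function names (`leftmost`, `middle`, `median_of_three`, `random_pivot`, `partition`, `quick_sort`) are made up for illustration, and the middle-element and median-of-three choosers are included as common variants. The partition step is one simple scheme among several, not the only way to do it.

```python
import random

# Each chooser returns the INDEX of the pivot within a[p..r] (inclusive).

def leftmost(a, p, r):
    return p

def middle(a, p, r):
    return (p + r) // 2

def median_of_three(a, p, r):
    m = (p + r) // 2
    # Order the three candidate indices by their values, take the middle one.
    return sorted((p, m, r), key=lambda i: a[i])[1]

def random_pivot(a, p, r):
    # random.randint is inclusive on both ends.
    return random.randint(p, r)

def partition(a, p, r, choose_pivot):
    """Partition a[p..r] in place and return the pivot's final index q."""
    k = choose_pivot(a, p, r)
    a[k], a[r] = a[r], a[k]          # park the chosen pivot at the end
    pivot = a[r]
    q = p                            # a[p..q-1] holds elements <= pivot
    for j in range(p, r):
        if a[j] <= pivot:
            a[q], a[j] = a[j], a[q]
            q += 1
    a[q], a[r] = a[r], a[q]          # move the pivot into its final place
    return q

def quick_sort(a, p=0, r=None, choose_pivot=median_of_three):
    """Sort list a in place between indices p and r; returns a for convenience."""
    if r is None:
        r = len(a) - 1
    if p < r:
        q = partition(a, p, r, choose_pivot)
        quick_sort(a, p, q - 1, choose_pivot)
        quick_sort(a, q + 1, r, choose_pivot)
    return a
```

Calling `quick_sort(data, choose_pivot=leftmost)` on an already sorted list recurses once per element, which is the O(n^2) degradation described above. Switching to `random_pivot` makes that worst case depend on the random draws rather than on the input order.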

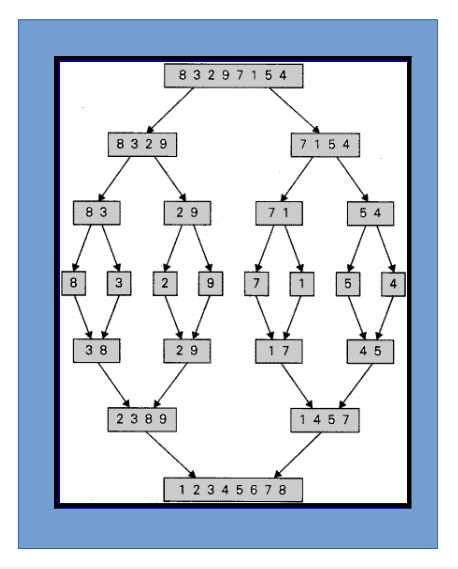

The figure traces quick sort on an array. The array starts off with index p pointing at the first element and index r pointing at the last element. After the first partition, the array has an index q pointing at the fourth element, which contains the value 6.

The array now has multiple indices named p, q, and r, one set for each subarray. The first p points at the first element, the first q points at the second element, and the first r points at the third element. The second p points at the fifth element, the second q points at the eighth element, and the second r points at the final element.

At the next level, the first p and r pair point at the first element, and the second p and r pair point at the third element. The third p points at the fifth element, and a q and the third r point at the seventh element. The fourth p and a q point at the ninth element, and the fourth r points at the last element.

Next, the first p points at the fifth element, and the first q and first r point at the sixth element. A p and r pair point at the last element. Finally, a single p and r pair point at the fifth element.

The first, and probably the most recognizable, benefit of the divide and conquer paradigm is that it lets us tackle difficult problems, sometimes even NP problems. Being given a difficult problem can be discouraging if there is no obvious way to go about solving it. Divide and conquer reduces the degree of difficulty by dividing the problem into easily solvable subproblems.

Another advantage of this paradigm is that it often plays a part in finding other efficient algorithms. In fact, it played a central role in finding the quick sort and merge sort algorithms.

Divide and conquer algorithms also tend to make good use of memory caches. When the subproblems become small enough, they can be solved within a cache, without having to access the slower main memory, which saves time and makes the algorithm more efficient. In some cases, divide and conquer can even produce more precise results in computations with rounded arithmetic than iterative methods would.

Finally, in the divide and conquer strategy we divide problems into subproblems that can be executed independently of each other, which makes this strategy well suited for parallel execution.
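One way to see the point about rounded arithmetic is pairwise summation, a divide and conquer way of adding floating-point numbers. The text does not name this technique, so it is used here only as an assumed illustration, and `pairwise_sum` and `naive_sum` are hypothetical helper names. The sketch splits the range in half, sums each half recursively, and adds the two partial sums, so rounding errors tend to accumulate more slowly than in a left-to-right loop.

```python
import math

def pairwise_sum(values, lo=0, hi=None):
    """Sum values[lo:hi] by recursively summing each half (divide and conquer)."""
    if hi is None:
        hi = len(values)
    n = hi - lo
    if n == 0:
        return 0.0
    if n == 1:
        return values[lo]
    mid = lo + n // 2
    # The two halves are independent subproblems; combine with one addition.
    return pairwise_sum(values, lo, mid) + pairwise_sum(values, mid, hi)

def naive_sum(values):
    """Iterative left-to-right sum, for comparison."""
    total = 0.0
    for v in values:
        total += v
    return total

if __name__ == "__main__":
    data = [0.1] * (2 ** 16)
    exact = math.fsum(data)  # correctly rounded reference sum
    print("naive error:   ", abs(naive_sum(data) - exact))
    print("pairwise error:", abs(pairwise_sum(data) - exact))
```

The two recursive calls touch disjoint halves of the list, which is also why this shape of algorithm lends itself to parallel execution.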
